
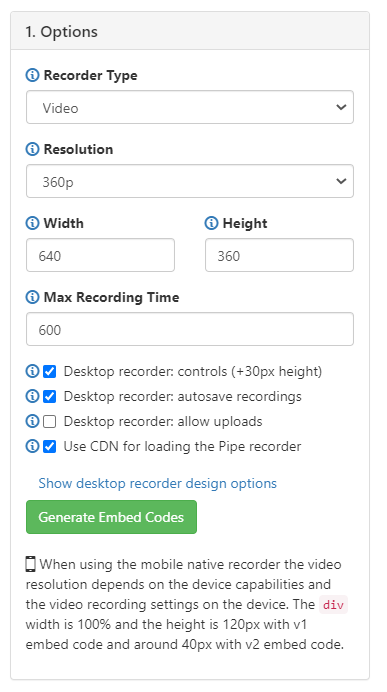

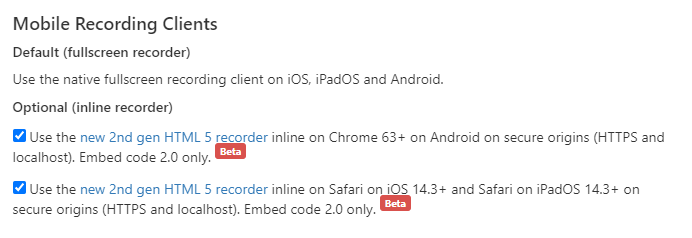

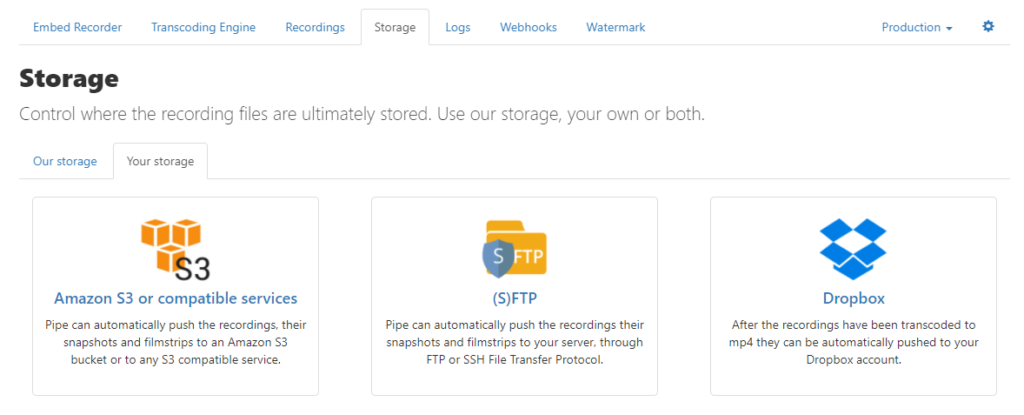

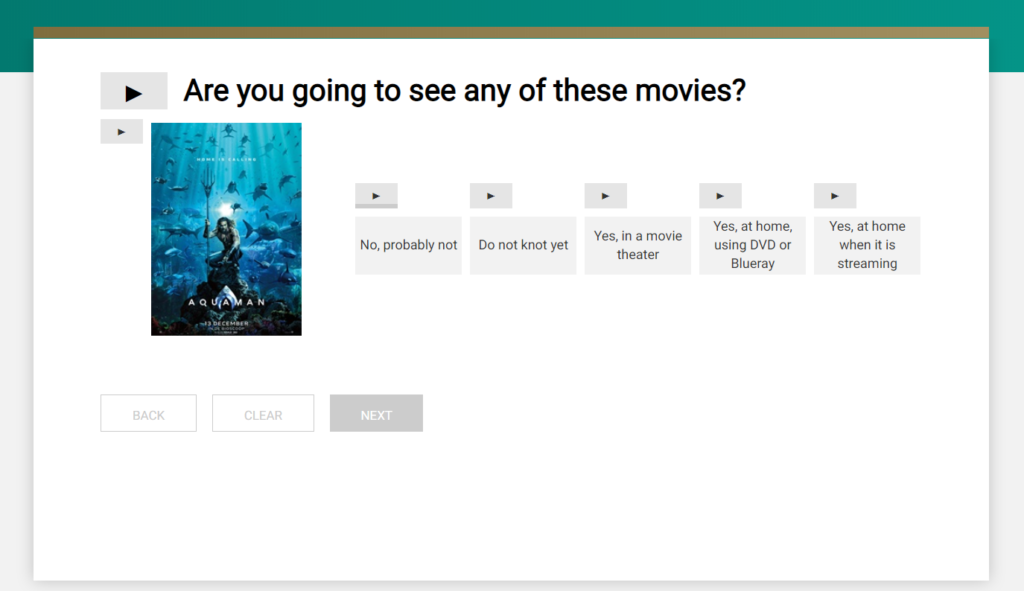

If you’d like to incorporate audio and video responses within Nfield surveys, you can do this by integrating Pipe’s recording platform. Below is an example of how this feature might be useful.

Incorporating Pipe into Nfield surveys requires specific technical expertise. You’ll need someone who is

Gather your resources

| Note: You should set the display size according to the device your respondents will use. It is also possible to make the sizing responsive to a range of device screens. Please refer to Pipe’s own instructions for this. |

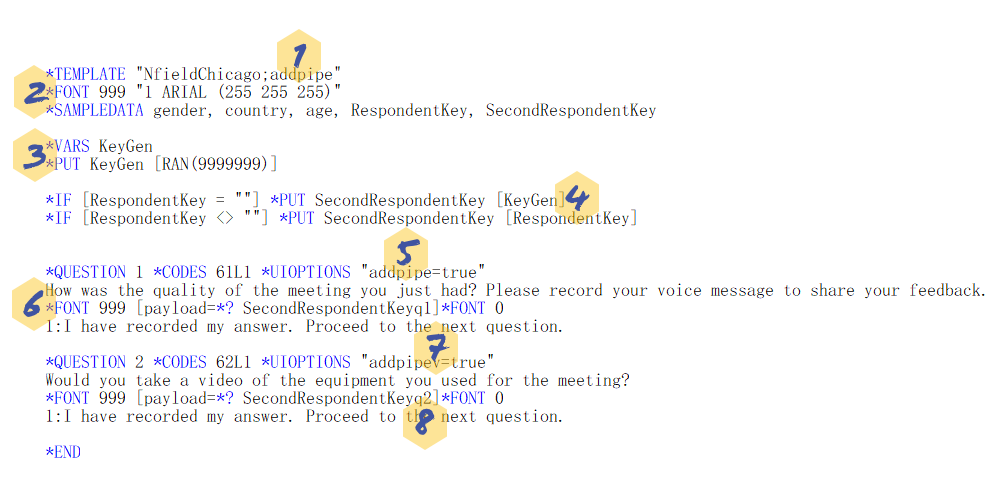

Here is an example of an ODIN script that shows an audio recorder and a video recorder. You can also download the script here. See below for explanations.

| Note: The higher the upper limit of the random number, the lower the chance of a duplicate SecondRespondentKey being generated. You should therefore check for duplicates, or decide which recordings to keep during data processing. |
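
To get a feel for how large the random range should be, the classic birthday-problem calculation below estimates the chance that at least two respondents draw the same number. It is a minimal sketch that assumes each SecondRespondentKey is drawn uniformly at random from 1 to `upper` (a hypothetical parameter name); if your ODIN script builds the key differently, the figures will not apply directly.

```python
import math


def duplicate_probability(respondents: int, upper: int) -> float:
    """Chance that at least two of `respondents` keys, each drawn uniformly
    from 1..upper, are identical (the birthday problem)."""
    if respondents > upper:
        return 1.0  # more respondents than possible values: a duplicate is certain
    # P(no duplicate) = product of (1 - i/upper) for i = 0..respondents-1;
    # summing logarithms avoids underflow for large respondent counts.
    log_no_duplicate = sum(math.log1p(-i / upper) for i in range(respondents))
    return 1.0 - math.exp(log_no_duplicate)


if __name__ == "__main__":
    for upper in (10_000, 1_000_000, 100_000_000):
        p = duplicate_probability(1_000, upper)
        print(f"1,000 respondents, keys 1..{upper:,}: {p:.4%} chance of a duplicate")
```

However large the range, the probability never quite reaches zero, which is why the duplicate check during data processing is still worthwhile.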

| Note: Once each recording is uploaded to Pipe, you might want to add *BACK to prevent re-recording, or check for re-recordings (identifiable by the same payload). |
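
For the re-recording check, one simple rule is to keep the latest upload for each payload. The sketch below assumes your recording list has been exported as dictionaries with hypothetical `payload`, `file_name` and `uploaded_at` fields; Pipe's actual export fields may differ, and you may prefer another rule (for example, keeping the first recording instead).

```python
from datetime import datetime


def latest_per_payload(recordings):
    """Keep only the most recently uploaded recording for each payload.

    Returns (kept, superseded): `kept` maps payload -> recording and
    `superseded` lists the older recordings that share a payload."""
    kept = {}
    superseded = []
    for rec in recordings:
        current = kept.get(rec["payload"])
        if current is None:
            kept[rec["payload"]] = rec
        elif rec["uploaded_at"] > current["uploaded_at"]:
            superseded.append(current)  # the earlier take is replaced
            kept[rec["payload"]] = rec
        else:
            superseded.append(rec)  # this take is older than the one we kept
    return kept, superseded


if __name__ == "__main__":
    example = [
        {"payload": "R1|Q1", "file_name": "a.mp4", "uploaded_at": datetime(2021, 5, 1, 10, 0)},
        {"payload": "R1|Q1", "file_name": "b.mp4", "uploaded_at": datetime(2021, 5, 1, 10, 5)},
    ]
    kept, superseded = latest_per_payload(example)
    print({p: r["file_name"] for p, r in kept.items()}, [r["file_name"] for r in superseded])
```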

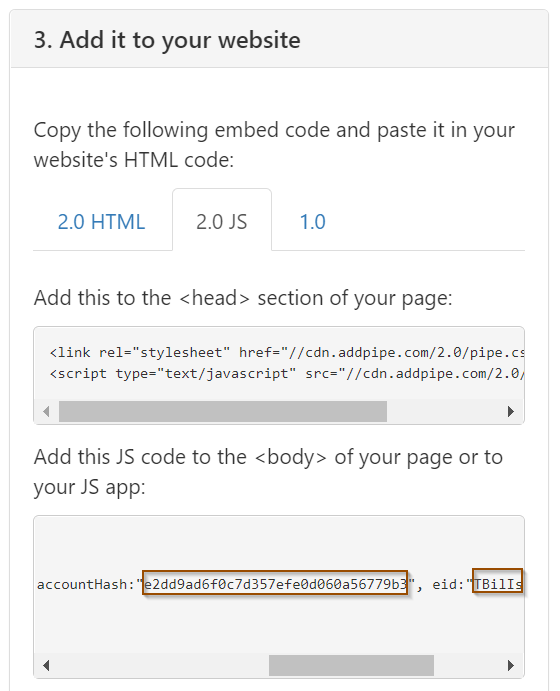

In the line `accountHash:"YourAccountHash", eid:"YourEID"`, replace `YourAccountHash` and `YourEID` with the JavaScript values previously generated in Pipe. (See step 5 in Setting up Pipe.)

| Note: These recordings are outside the scope of Nfield. You should therefore be aware of where the data is stored, and of any differences in security and compliance levels. |

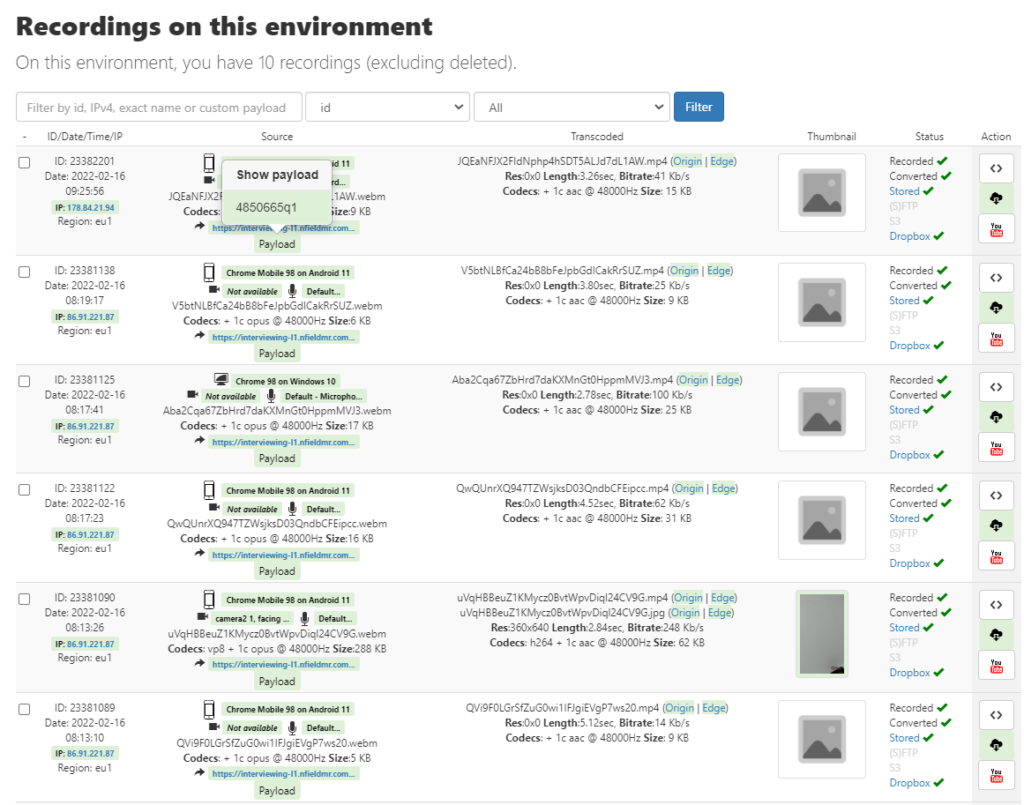

Clicking through every individual recording to find its payload information (respondent key and question ID) and rename its file would be too labor-intensive. Instead, you can automate this task by using a webhook to send the payload and file name that trigger the file renaming (ideally in batches). Please refer to the Pipe webhook documentation for details on how to use it. Alternatively, you can program something yourself or use codeless automation tools, such as Microsoft Power Automate, to do the work.
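
To illustrate the renaming step, here is a minimal sketch of the logic a webhook receiver might run for each notification. It assumes, hypothetically, that the webhook body is JSON with a `videoName` field (the stored file) and a `payload` field that your survey script sets to `respondentKey|questionId`; check Pipe's webhook documentation for the real field names before relying on anything like this. Only the standard library is used, and no HTTP server or actual file renaming is included.

```python
import json
import re
from pathlib import PurePosixPath


def _safe(part: str) -> str:
    """Replace characters that are awkward in file names with underscores."""
    return re.sub(r"[^A-Za-z0-9_-]", "_", part)


def target_name(webhook_body: str) -> tuple:
    """Map one webhook notification to (current file name, new file name).

    HYPOTHETICAL format: a JSON object with "videoName" (the stored file) and
    "payload" set to "respondentKey|questionId" by the survey script."""
    data = json.loads(webhook_body)
    current = data["videoName"]
    respondent_key, question_id = data["payload"].split("|", 1)
    suffix = PurePosixPath(current).suffix  # keep the extension, e.g. ".mp4"
    return current, f"{_safe(respondent_key)}_{_safe(question_id)}{suffix}"


def rename_plan(bodies) -> dict:
    """Build a {current name: new name} mapping for a batch of notifications."""
    return dict(target_name(body) for body in bodies)
```

Collecting a plan for a batch first, and renaming afterwards, makes it easy to review or log the mapping before any files are touched.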

We hope you find these instructions useful. Please feel free to share your feedback with us.

Attractive, easy-to-digest presentation plays an important role in encouraging survey response. Nfield automatically wraps surveys in a professional design that’s consistent with our industry’s highest standards. Most of the time, this provides everything our users need. However, there can be occasions when you want to customize your presentation more extensively.

Experienced scripters with knowledge of common web development techniques (JavaScript and CSS) can add extra presentation elements by incorporating their own theme packages (via a zip file).

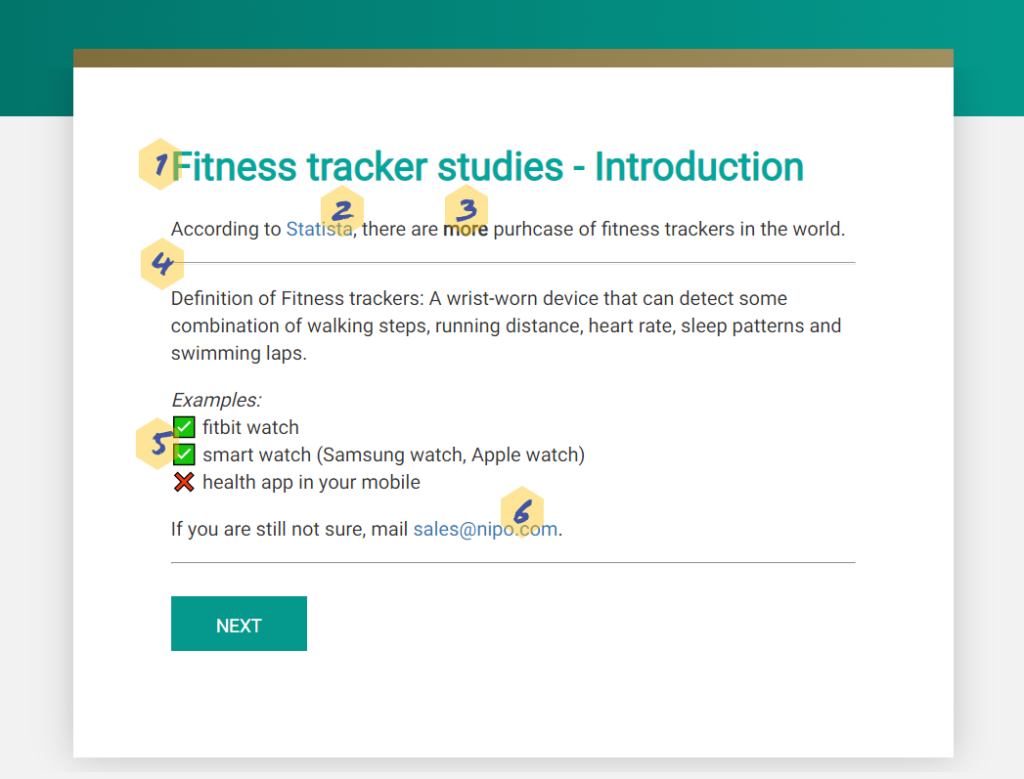

Markdown is a lightweight markup language that can be used to add formatting elements to plaintext documents.1 It is very popular, especially among developers, and is widely used by our own teams and in Nfield documentation.

We’ve added a new pre-packaged theme (markdown.zip) to the theme example section in NfieldChicago documentation for you to use. This includes a third-party Markdown parser library, which gives you additional options for formatting your text using basic Markdown syntax.

Here’s how it works.

Adding headers improves respondents’ experience by clarifying where they are in the survey.

These are created simply by using `#` for header 1, `##` for header 2, and so on.

Links are sometimes useful for enabling respondents to reference relevant information, which helps them understand the context and increases their trust.

A clickable link that opens in a new tab is created by using `[text](url "optional hover-over text")`.

There is no need to define bold, italic, and bold italic styles separately for every font.

In Markdown, this is achieved simply by using `_italic text_`, `__bold text__` and `___bold italic text___`. This results in much simpler, easier-to-read scripts.

If you want to add supplemental information to help respondents answer specific questions, enclosing it between two horizontal lines makes for a good, clear presentation.

A line can be created in Markdown by using `---`.

Millennials are more likely to engage in surveys that are presented in a more visual and gamified way. Emojis3 are also a good tool in this regard.

You can now copy and paste emojis to your script. See a list of emojis in Unicode 1.1.

It is usual to provide a means of contact either at the beginning or the end of a questionnaire.

You can now easily incorporate a clickable email link by enclosing your email address in angle brackets, as shown here: <[email protected]>. This will launch the user’s email program or app.
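
To make the syntax above concrete, here is a deliberately tiny Python converter for just these constructs: headers, links with optional hover text, the three emphasis styles, horizontal lines and email autolinks. It is a teaching sketch, not the third-party parser bundled in markdown.zip, and it ignores many Markdown rules (escaping, nesting, lists, code spans). The `target="_blank"` attribute only mimics the open-in-a-new-tab behavior described above; how the theme actually achieves that is an assumption here.

```python
import re

# Links: [label](url) or [label](url "hover text"); e-mail autolinks: <name@domain>
TOKEN = re.compile(
    r'\[(?P<label>[^\]]+)\]\((?P<url>\S+?)(?:\s+"(?P<title>[^"]*)")?\)'
    r"|<(?P<email>[^@\s<>]+@[^@\s<>]+)>"
)
HEADER = re.compile(r"^(#{1,6})\s+(.*)$")


def emphasis(text: str) -> str:
    """Apply ___bold italic___, __bold__ and _italic_ (longest marker first)."""
    text = re.sub(r"___(.+?)___", r"<strong><em>\1</em></strong>", text)
    text = re.sub(r"__(.+?)__", r"<strong>\1</strong>", text)
    return re.sub(r"_(.+?)_", r"<em>\1</em>", text)


def inline(text: str) -> str:
    """Convert links and autolinks; apply emphasis only to the text around them,
    so underscores inside URLs or e-mail addresses are left untouched."""
    out, pos = [], 0
    for m in TOKEN.finditer(text):
        out.append(emphasis(text[pos:m.start()]))
        if m.group("email"):
            email = m.group("email")
            out.append(f'<a href="mailto:{email}">{email}</a>')
        else:
            url, title = m.group("url"), m.group("title")
            label = emphasis(m.group("label"))
            title_attr = f' title="{title}"' if title else ""
            out.append(f'<a href="{url}"{title_attr} target="_blank">{label}</a>')
        pos = m.end()
    out.append(emphasis(text[pos:]))
    return "".join(out)


def mini_markdown(line: str) -> str:
    """Convert one line of the Markdown subset to HTML (no HTML escaping)."""
    if line.strip() == "---":
        return "<hr>"
    header = HEADER.match(line)
    if header:
        level = len(header.group(1))
        return f"<h{level}>{inline(header.group(2))}</h{level}>"
    return inline(line)
```

Handling the longest emphasis marker first is what lets `___text___` become bold italic instead of being split into nested bold and italic fragments.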

Instructions for doing this begin at step 7 of 10 Steps to create a theme. If this will be your first time incorporating a theme in Nfield, we recommend watching Academy #6 NfieldChicago theming.

The world of Markdown is quite extensive, with possibilities ranging from standard headers to more advanced options. Please look at theming in NfieldChicago documentation to download and try this out. We also have another example theme available for setting font colors called markup.zip (See bottom right for download link). Please feel free to share any feedback or questions you have about themes with us.

NIPO is proud to announce the opening of our new Mumbai office. In recent years we have seen strong growth in our business in the Asia Pacific region, driven in part by the Nfield China deployment we launched two years ago. This major step is now followed by the opening of our new office in Mumbai, which has been in business since 1 November 2021.

The NIPO Mumbai office will be dedicated to supporting our customers in the Asia Pacific region, with backup from the NIPO Helpdesk in Amsterdam.

NIPO offers remote support to all Nfield users by email (not by telephone at the moment, as all staff are working from home for Covid-related reasons), and hosts Nfield introduction sessions and on-site training sessions on topics ranging from survey creation to fieldwork management.

Office contact details:

3rd Floor, The ORB

IA Project Road, Andheri

Mumbai 400099, India

We are delighted to announce the opening of this new office and look forward to supporting you from Mumbai!

Nfield’s Voice Over feature is a very useful tool for both Online and CAPI surveys, which can be used to overcome visual impairments (Online), interviewer bias (CAPI) and local dialect differences (CAPI and Online).

By voicing survey questions and the various response options, Voice Over broadens the scope of people who can contribute to answering surveys.

In particular, it provides the following benefits:

Nfield has recently enhanced its Voice Over functionality by making it possible to play audio for questions and answers separately. This means users can replay specific answers for clarification, without having to listen to the entire question and all its answers again.

Go to the page produced by our NfieldChicago team for a simple demonstration of Voice Over in action and instructions for implementation. You’ll also find this in the NIPO Academy video #19.

Setting up Voice Over isn’t difficult. It simply requires some patience, because each separate audio file has to be linked to its part of the question.

If there’s anything else you’d like to know about using Nfield’s Voice Over feature, don’t hesitate to contact us!

Having achieved Gold status for the Application Integration competency in the Microsoft Partner Network at the end of last year, we are proud to share the news that NIPO has also achieved Silver status for the much-desired Security competency.

The competencies Microsoft awards are a strong confirmation that these partners have demonstrated the highest, most consistent capability and commitment to the adoption and implementation of the latest Microsoft technology. Securing a competency is highly dependent on successful certification of technical staff, which implies a deep and continuous investment from both the organization and the individual software developers.

Our Nfield users can benefit directly from NIPO’s status within the Microsoft Partner Network. Alongside our annual ISO 27001:2013 (Information Security) certification, this is further proof of leading external recognition for the NIPO team and our Nfield platform. This information can be used in client pitches for projects where Nfield, as Kantar’s destination platform, is used and where the customer would like to understand how, for example, security is addressed.

Securing Silver status for the Security competency was one of our goals for 2021. The NIPO team will now continue its efforts to upgrade the Security competency to Gold status.

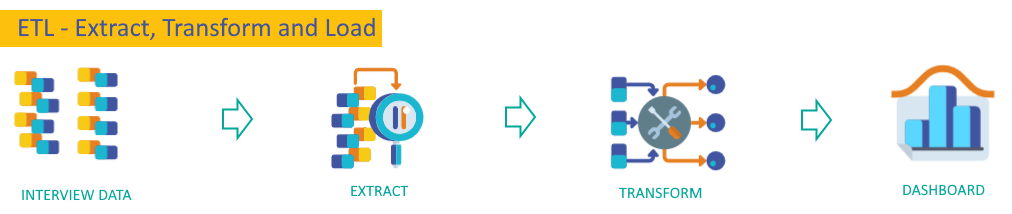

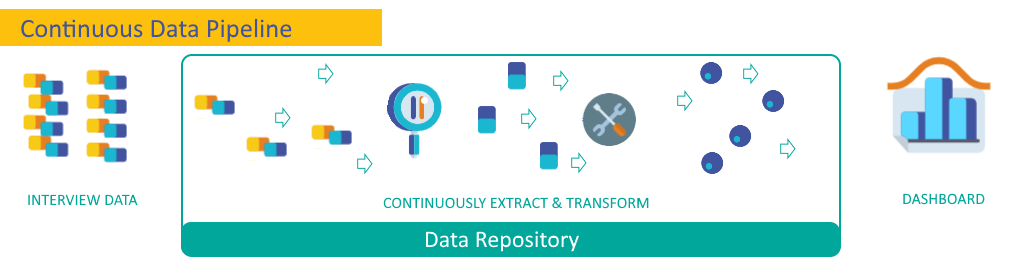

More and more customers have been asking for real-time insights while a survey is still in progress. And now, Nfield is able to deliver these via our new Data Repository feature, which provides a continuous data delivery pipeline straight into your dashboard.

Today’s fast-paced world calls for ever-faster reporting. Nfield Online’s powerful interviewing capability enables a tremendous amount of data to be collected in a short time-frame, without hardware or network limitations. For many customers, the ability to quickly capitalize on this data is also very important.

A good example could be in media production, where survey data can be used to inform outlets of current audience interests. Let’s say, skateboarding suddenly becomes a hot topic at the Olympic Games. The content producer can choose to use this information to switch focus and retain audience attention.

With the Nfield Data Repository feature, insights can be attained immediately, without expensive setup and dependency on IT teams.

Turning Nfield interview data into insights in customers’ own dashboards is a complex task. Traditionally, the ETL (extract, transform and load) process requires involvement from data processing and IT teams, either during initial setup or each time the process is run, which is a time-consuming overhead.

Now, with the Nfield Data Repository, data from completed interviews is extracted every 10 minutes and directly fed into an engine which converts it into a database format, from where your dashboard / reporting tool can instantly retrieve it.

To keep the fast, high-performance engine behind the data pipeline affordable for all customers, we offer scalable, usage-based pricing.

You’ll find the new “REPOSITORIES” section in the Nfield Manager interface once it has been enabled for you. To enable it, please contact your Nfield account manager.

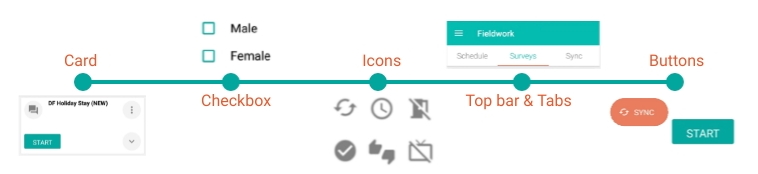

The Nfield CAPI app, used by interviewers to conduct surveys, has recently undergone an extensive makeover to make it more intuitive and, with that, faster to work with. This has resulted in a new version (2.11) which users are now invited to switch to.

To switch to version 2.11, go to the diagnostics tab in the Nfield CAPI app’s settings. For now, these settings allow you to switch between the old and new versions as you prefer. Over time, the old version will be phased out.

Instantly identified by its teal coloring (instead of blue), the new version of the Nfield CAPI app has been completely redesigned in terms of navigation and how the various screens look and work. From the very start, you’ll see all the high-level information shown together on one screen, with the ability to expand any section with one tap. Users thereby have instant access to the latest status details without going to a different screen, and can quickly switch between the various functional elements.

This simplified navigation provides the shortest path to starting an interview. Throughout the course of a day’s work, interviewers spend less time navigating around the app and enjoy faster access to what they need to know and do. With fewer distractions, work is easier to focus on.

The person behind the new version of our Nfield CAPI app is our UX (user experience) designer, Deniz. Having worked at NIPO for more than 12 years, including time on our helpdesk, Deniz has a deep understanding of what Nfield CAPI users need. In his UX work, he uses various tools and techniques to generate insights into user behaviors. Putting these two things together, combined with the fact that user interaction technology has evolved a long way since Nfield was first launched in 2013, Deniz realized it was high time to give the Nfield CAPI app a major overhaul. As a result, our customers and their interviewers all benefit from a more streamlined way of working.

The Nfield CAPI app’s new look is based on the Material Design guidelines (https://material.io/), which standardize how on-screen elements should be designed for intuitive interaction. These determine the look and behavior of elements such as navigation bars and cards.

Following standardized principles is advantageous because users feel comfortable more quickly with an app that’s new to them, as they are already familiar with the process from other apps. In psychology, this is known as the Mere-Exposure Effect. So we have very solid reasoning for adopting design standardization!

Below are the components used in building the new Nfield CAPI app, all of which should be familiar to anyone who regularly uses apps.

Market research interviewers often only work part-time or for short-term periods. The ability to quickly get up-to-speed on how to do the job is very important. Preconditioned familiarity for how to use their tools, in this case the Nfield CAPI app, is therefore very valuable. We believe it only takes two or three uses of the new Nfield CAPI app to feel fully comfortable with it. And, of course, because the new navigation is more streamlined, work can be done more quickly too.

The new version Nfield CAPI app is all about making interviewers’ work easier and faster. Deniz will continue to update it as necessary to improve the user experience even more. The more feedback he gets from you, the better he can make it!

We therefore invite you to tell us what you think about the new version Nfield CAPI app. What do you like about it and what do you feel should be done differently? What new functionality would you like to have?

Contact us at [email protected].

Nfield surpassed a significant milestone on 20 May 2021, smashing through the 100K completed interviews per 24 hours barrier. More importantly, the Nfield platform handled the 104,758 successful completes without showing the slightest level of stress.

The record total comprised 86,949 Online interviews and 17,809 CAPI interviews. Of these, 49K were completed on the APAC server, an incredibly high figure driven by a single survey in Japan which produced 37,226 completes.

This Japanese survey is, itself, significant for the fact that it ran as an isolated survey using dedicated Azure resources (containers). Using this solution means that the load it generated did not have any impact on other domains and/or surveys. The ability to run isolated interviewing is facilitated via Nfield’s Function app, which has been made possible following intensive collaboration between our team and Microsoft architects. The Function app itself was hit more than 2 million times in 24 hours in relation to this Japanese survey.

Having confirmed Nfield’s ability to comfortably handle this level of traffic, we are looking forward to it becoming a daily norm. It’s good to know that isolation can be a very positive thing! 😊

Fully compliant practices and ISO 27001:2013 certification for our Nfield data collection solution means you can rest assured when it comes to data security. Nfield is a scalable solution with an open architecture that allows you to perform simple to complex surveys with stunning design. Nfield is the cloud survey solution for market research professionals.

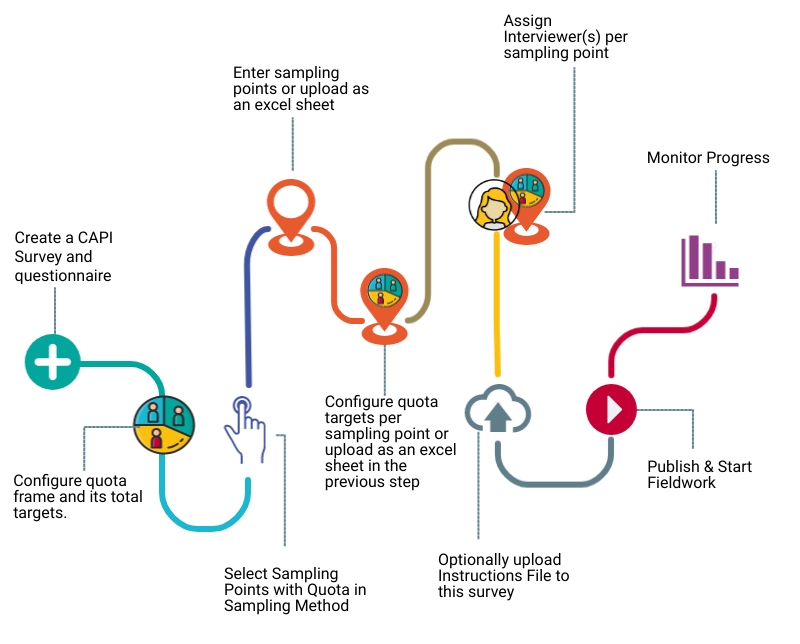

Sampling Points enable researchers to obtain quota-proportioned responses across all individual settings within a CAPI survey. They can be embedded in Quota Target surveys to ensure consistent representation within every different location. As well as providing overall balance, the use of Sampling Points means fieldwork progress can be examined on a setting-by-setting basis.

Sampling Points are the various locations where interviews are carried out within a CAPI survey. They might be exhibition halls, shopping malls, districts, cities, cinemas, hospitals, places of worship, etc. When Sampling Points are applied within a survey, every fieldwork location must be designated as or to a Sampling Point. There cannot be any non-designated interview locations.

Because different sampling points might be different sizes, with access to more or fewer respondents, each one requires its own Quota Target.

For example, a CAPI survey examining behavior of hotel guests would involve face-to-face interviews in variously-sized hotels. To ensure respondents from each key gender and age group segment are proportionately represented at each individual location, the Quota Targets are adjusted according to hotel size. The smaller the hotel, the smaller the quota targets, and vice versa. But the ratios per segment always stay the same. Larger hotels may thereby also call for more interviewers to be assigned.

Sampling Points are easy and intuitive to set up in the Nfield Manager. If you already have any CAPI Sampling Point with Quota surveys in CAPI Manager, you can migrate these to the Nfield Manager with just one click, for an improved experience.

Sampling Points can be set up in the Nfield Manager either by manual entry or by uploading an Excel sheet (complete with Quota Targets). Individual Sampling Points can also easily be updated as necessary.

Note that you have to calculate and enter the different Quota Targets for each Sampling Point. Nfield doesn’t have a facility for automatically adjusting these.
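
Because Nfield does not calculate per-Sampling Point Quota Targets for you, a small script can help. The sketch below assumes targets scale with a size measure you supply (for the hotel example, perhaps room count) and that every Sampling Point uses the same segment ratios; the hotel names, sizes and segments are made up. It uses largest-remainder rounding with exact fractions, so each point's segment targets add up exactly to that point's total and the point totals add up to the overall target.

```python
from fractions import Fraction
from math import floor


def largest_remainder(total: int, weights: dict) -> dict:
    """Split an integer total in proportion to weights; the parts sum to total."""
    weight_sum = sum(Fraction(w) for w in weights.values())
    exact = {name: Fraction(w) * total / weight_sum for name, w in weights.items()}
    parts = {name: floor(value) for name, value in exact.items()}
    shortfall = total - sum(parts.values())
    # Hand the leftover units to the largest fractional remainders.
    by_remainder = sorted(exact, key=lambda n: exact[n] - parts[n], reverse=True)
    for name in by_remainder[:shortfall]:
        parts[name] += 1
    return parts


def sampling_point_targets(total: int, sizes: dict, segment_shares: dict) -> dict:
    """Quota Targets per Sampling Point: size-proportional totals, same segment ratios."""
    point_totals = largest_remainder(total, sizes)
    return {point: largest_remainder(n, segment_shares) for point, n in point_totals.items()}


if __name__ == "__main__":
    # Hypothetical hotels (sized by room count) and gender x age segments.
    hotels = {"Hotel A": 300, "Hotel B": 120, "Hotel C": 80}
    segments = {"Male 18-34": 1, "Male 35+": 1, "Female 18-34": 1, "Female 35+": 1}
    for hotel, targets in sampling_point_targets(400, hotels, segments).items():
        print(hotel, targets)
```

The resulting figures can then be entered manually or copied into the Excel sheet you upload.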

If you have any questions or comments about setting up and using CAPI surveys with Sampling Points in the Nfield Manager, please do not hesitate to contact us.

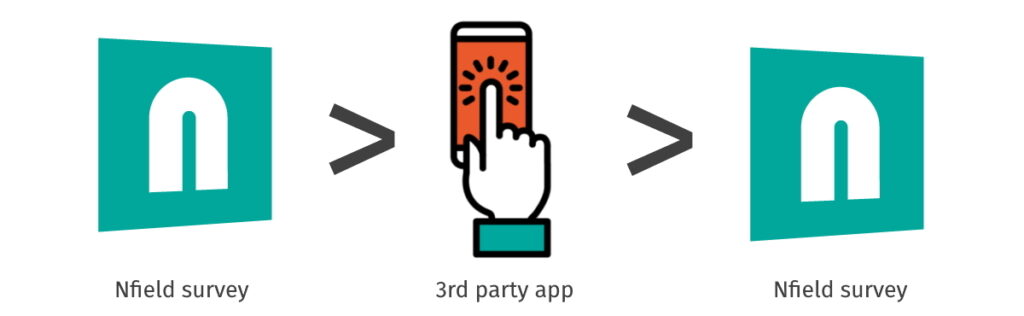

App-in-the-Middle is a feature developed for Nfield, in which control of a survey is temporarily handed over to an external application, so that functionalities not offered within Nfield can be incorporated into survey execution. This is useful, for example, if you want to make use of a Sawtooth module for implementing a complex conjoint.

All you have to do is instruct your Nfield survey when to switch to the external app, and instruct the external app when to switch back to your survey, making sure, of course, that all the relevant data is handed over in both directions.

Within Nfield, this is handled in the sense of pausing and resuming interviews. We’ve accommodated the App-in-the-Middle feature by making it possible to resume paused interviews from an “as is” status, in addition to the usual “as was” status.

Under normal circumstances which don’t involve App-in-the-Middle, a paused Nfield Online or CAWI interview simply returns to its last-known state upon resuming, with all variables and routing restored to the point they were at when paused. This is known as resuming from the “as was”.

Incorporating App-in-the-Middle requires Nfield to accept changes made during the external app’s time in control and resume from this updated situation. This means resuming from the “as is” instead of the “as was”. We’ve introduced this option into Nfield, so you can now incorporate extra functionality via App-in-the-Middle whenever you need to.

This short video shows the basic principles without going into too much detail.

Control over conducting the interview is handed over from Nfield to the external app by using the ODIN command: *ENDST 107. This pauses the interview in Nfield and redirects to the relocation link defined for response code 107. The external app hands back to Nfield by redirecting to the survey URL with its corresponding respondent key and other information.

For the interview to continue in Nfield, some special script constructs must be used to prevent the interview executing *ENDST 107 again. This is done by using either the *INIT block or information that is handed over from the external app. You can find out more about using *INIT blocks in our Academy session video on pausing and resuming surveys.

The relevant information can be handed over to an external app by adding it as query string parameters to the exit link configured for the HandedOver response code (107).

For example: `https://aitm.com?respondent={respondentKey}&extra={extraInfo}`

The names between the curly braces `{}` are ODIN variable names. They are replaced by the variables’ values before redirection to the external app’s URL.
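
The substitution can be pictured as simple template filling. This sketch is not Nfield's code; it only illustrates the idea, URL-encodes each value as a precaution of our own (whether Nfield encodes values is not stated here), and uses invented variable values.

```python
import re
from urllib.parse import quote

# A placeholder is a variable name between curly braces, e.g. {respondentKey}.
PLACEHOLDER = re.compile(r"\{(\w+)\}")


def fill_exit_link(template: str, variables: dict) -> str:
    """Replace each {name} with the URL-encoded value of variable `name`."""
    def substitute(match):
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"no value for placeholder {{{name}}}")
        return quote(str(variables[name]), safe="")
    return PLACEHOLDER.sub(substitute, template)


if __name__ == "__main__":
    print(fill_exit_link(
        "https://aitm.com?respondent={respondentKey}&extra={extraInfo}",
        {"respondentKey": "abc123", "extraInfo": "hotel guest"},
    ))
```

Failing loudly on an unknown placeholder avoids silently sending a literal `{name}` to the external app.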

The external app hands back to Nfield by redirecting to the survey URL. The survey URL must contain the respondentKey. Extra information can be added as query string parameters. The interview will then be resumed (from “as is”) with the ODIN variables having the values now shown in the query string parameters.

For example: `https://{startlink}/{respondentKey}?aitmResult=199`

This will resume the interview and if an ODIN variable with the name aitmResult is defined it will get the value 199.
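
On the external app's side, handing back means building the survey URL with the respondent key and any result parameters. The sketch below does that with the Python standard library and also shows how the query string maps back to variable names and values. The start link `survey.example.com/s/XYZ` is a placeholder, `aitmResult` comes from the example above, and how Nfield itself parses the URL is not shown here.

```python
from urllib.parse import parse_qs, quote, urlencode, urlsplit


def handback_url(start_link: str, respondent_key: str, results: dict) -> str:
    """Build https://{startlink}/{respondentKey}?name=value&... for the hand-back."""
    base = f"https://{start_link}/{quote(respondent_key, safe='')}"
    return f"{base}?{urlencode(results)}" if results else base


def returned_values(url: str) -> dict:
    """Read the query string back as name -> value (last value wins if repeated)."""
    return {name: values[-1] for name, values in parse_qs(urlsplit(url).query).items()}


if __name__ == "__main__":
    url = handback_url("survey.example.com/s/XYZ", "abc123", {"aitmResult": 199})
    print(url)
    print(returned_values(url))
```

Note that a query string only carries text, so `199` travels as the string `"199"`.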

Let us know if you want to integrate a specific app via App-in-the-Middle into your surveys. We’ll be happy to help you set up your project.

The market research industry’s three main channels – Online, CATI and CAPI – each have distinct advantages in different situations. Online is fast, cheap and far-reaching. But better quality responses can sometimes be assured via CATI and CAPI channels, which can be backed up with visual, audio and GPS location evidence.

Circumstantial, cultural, demographic and geographic factors also come into play when it comes to which channel is most effective for delivering response. But these can vary within an individual survey and even within an individual sample.

To offer a comprehensive service that keeps all the options open, market research companies ideally need the ability to deploy all three channels, switching between them as necessary in a mixed-mode project.

While a particular survey type is often a preferred choice for a specific market research project, there are often circumstances when the ability to switch modes is beneficial.

Because Online, CAPI and CATI channels are driven by different technical systems, switching between them is often not as simple as it needs to be. Making a survey available via all three systems typically requires duplicated set-up work and additional effort to consolidate the results. For some market research companies, this is a burden too far. For the sake of efficiency, they dedicate themselves to a single channel, thereby cutting themselves off from the many opportunities that come with multi-channel capability.

Here at NIPO, we are all about empowering market research companies to perform better. So we decided to get a grip on the situation by developing Online, CATI and CAPI systems which are fully compatible with each other.

Using our Nfield Online, CATI and CAPI survey solutions, you only have to set up a survey once for it to automatically translate across all three channels. Each respondent’s answers can be accepted via any channel and results are automatically consolidated.

If you’re currently burdened with incompatible systems for multi-mode surveys, switching to Nfield will noticeably boost your productivity.

And if you’ve been limiting yourself to just one or two channels, Nfield provides an easy way to explore the opportunities multi-mode survey capability could bring to your business.

What’s more, because Nfield survey solutions are specifically designed to meet the needs of market research professionals, they’re fully equipped with everything from stunning survey design and advanced scripting options to elaborate sampling, quota handling and much more.

Feel free to contact us to discuss your requirements and ask anything you need to know about Nfield Online, CAPI and CATI.

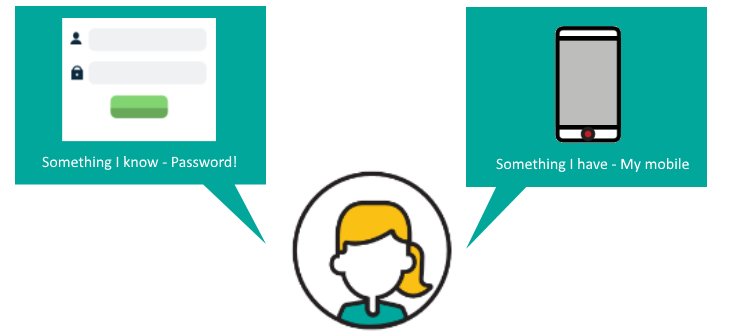

Our article Your Nfield login’s value on the dark web explains why access to your Nfield account is such a tempting prize for hackers. The good news is you can make it almost impossible for them to get in by deploying two-factor authentication. Adding this extra security layer to your username and password login makes your Nfield account more than 99.9% less likely to be compromised, according to research by Alex Weinert, Group Program Manager for Identity Security and Protection at Microsoft1.

This article explains the concept of two-factor authentication (2FA) and its benefits, as well as giving instructions for setting it up and rolling it out in your organization.

You’re probably already familiar with using two-factor authentication to access things such as bank, social media (Facebook, LinkedIn, Instagram) and email accounts. 2FA adds to the first factor – your email address/username and password combination – by asking for a code which can only be obtained via a physical object you have in your possession. This might be a key-like token, an office access card or an SMS message received on your mobile phone. Very highly secured systems may even require a third factor, such as a fingerprint or iris scan.

Nfield accounts secured with two-factor authentication require users to enter a code (a token) generated by a standard authenticator app on a mobile phone. This has the effect of complementing something you know (your username and password) with a code obtained through something you have (your phone). It effectively blocks any hackers who have obtained your username and password from getting into your Nfield account, as they would not be able to retrieve the second factor code from the phone. Your valuable Nfield fieldwork and respondent data is thereby protected from prying eyes.

Different companies have different policies for protecting different types of data. Even if your organization doesn’t require two-factor authentication, your client’s organization might. Having it set up on your Nfield account means you’ll be compliant with every policy or project requirement.

With compliance and IT policies regularly being updated to fend off new security threats, it’s probably only a matter of time before two-factor authentication becomes a standard requirement.

2 https://www.zivver.com/blog/which-type-of-2fa-do-i-need-to-use-under-the-gdpr

3 https://advisera.com/27001academy/blog/2017/01/16/how-two-factor-authentication-enables-compliance-with-iso-27001-access-controls/

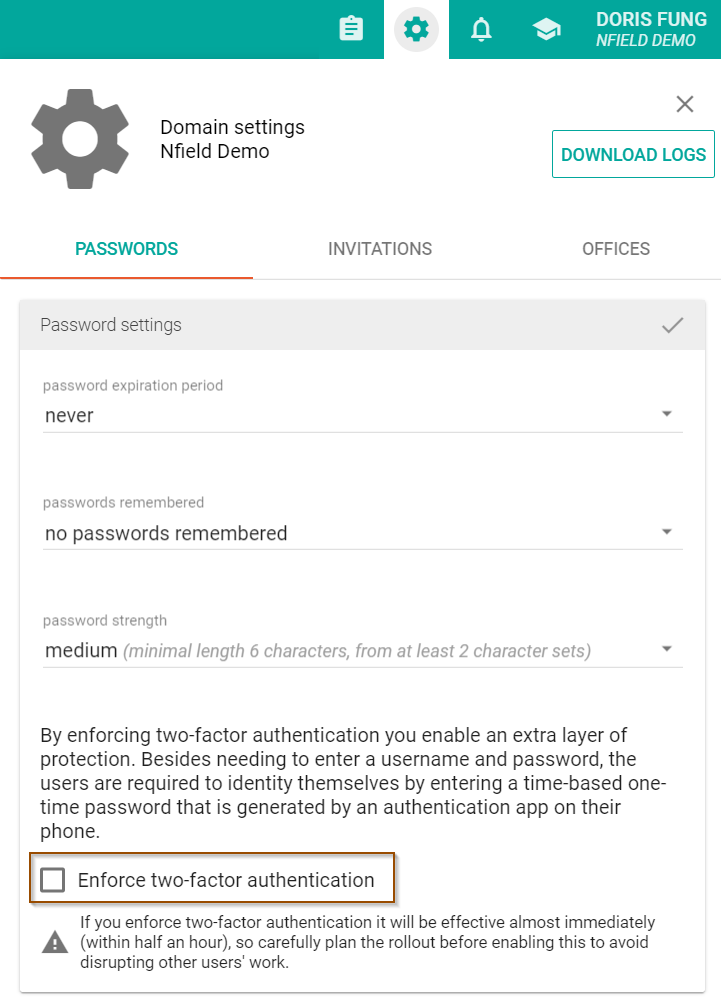

Only domain administrators or local domain managers can enable this feature across an Nfield domain. The option to enable it is located on the password policy page in the domain settings.

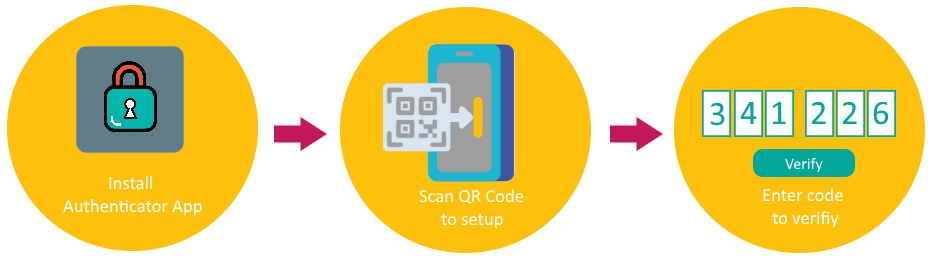

After enabling two-factor authentication, follow the on-screen instructions for setting up two-factor configuration. You’ll need to start by selecting and setting up an authenticator app, such as Microsoft Authenticator, Google Authenticator or others. Next, use the app to scan the QR code provided by Nfield. Once all is correctly configured, the app will provide a code which needs to be entered into Nfield to complete the two-factor authentication. It is as simple as that! Every time you log in to Nfield, you go through the same process, getting a new code each time.
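
Authenticator apps such as Microsoft Authenticator and Google Authenticator typically generate time-based one-time passwords (TOTP, RFC 6238), and the QR code they scan typically encodes the shared secret. Assuming Nfield uses this standard (the article only says a standard authenticator app is used), the six-digit code is computed roughly as below. This is an illustrative sketch, not Nfield's implementation; the `verify` helper and its one-step drift window are our own assumptions, and real secrets should never be hard-coded.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret_b32: str, at: Optional[float] = None, digits: int = 6, step: int = 30) -> str:
    """Time-based one-time password (RFC 6238) using HMAC-SHA1.

    secret_b32: the shared secret as Base32 text; at: Unix time (defaults to now)."""
    # Base32 secrets are often shown without '=' padding; restore it before decoding.
    key = base64.b32decode(secret_b32.upper() + "=" * (-len(secret_b32) % 8))
    # Number of 30-second steps since the Unix epoch; phone and server compute the same.
    counter = int((time.time() if at is None else at) // step)
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation (RFC 4226, section 5.3): pick 4 bytes based on the last nibble.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify(secret_b32: str, submitted: str, at: Optional[float] = None, window: int = 1) -> bool:
    """Accept the code for the current step or up to `window` steps either side."""
    now = time.time() if at is None else at
    return any(
        hmac.compare_digest(totp(secret_b32, now + i * 30), submitted)
        for i in range(-window, window + 1)
    )
```

Because the code depends only on the shared secret and the current time step, a stolen password alone is not enough: an attacker would also need the secret held on your phone.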

Two-factor authentication will become effective across your Nfield domain within 30 minutes of being enabled. Any logged-in users will get a prompt asking them to complete their configuration setup. Other users will get this prompt when they next log in. Access via the public API (https://www.nipo.com/api-what-researchers-need-to-know) is exempt from two-factor authentication.

To minimize disruption to your team, please plan this carefully. We recommend you consider the following:

Whether or not you feel you need it right now, we highly recommend enabling two-factor authentication for your Nfield domain, to enjoy better security protection and gain more benefits from Nfield. If you have any questions, please contact our helpdesk. And, of course, we’re always curious to get your feedback via your account manager.

Invisible to standard internet browsers and search engines, the Dark Web is a place where users anonymously access content which is either illegal or related to illegal activities. This includes the lucrative marketplace for stolen login details, which are bought by criminals who are able to monetize the data and permissions they unlock. Among these, your Nfield login is a desirable prize, as it opens the door to a large pool of personal information.

How desirable? This can be worked out by taking a look at trading prices for the items your Nfield data helps unlock.

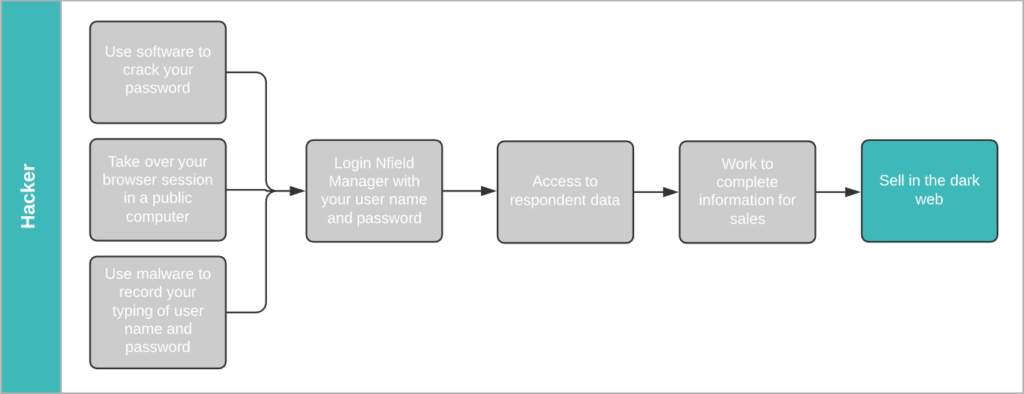

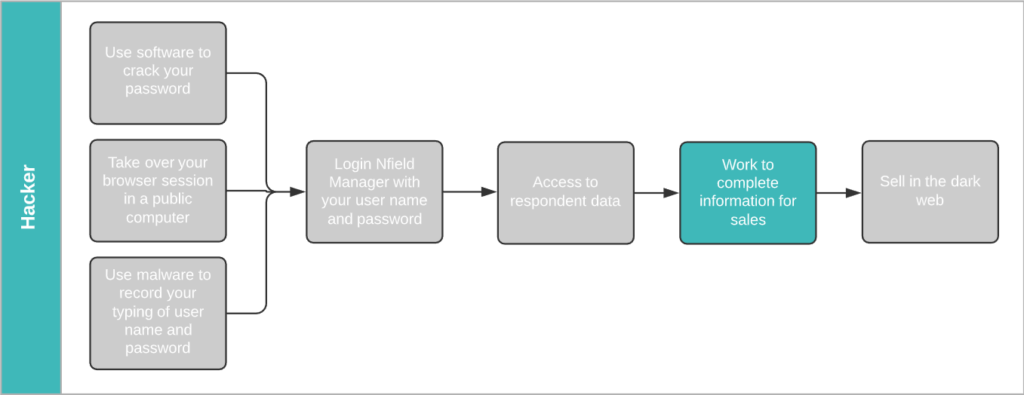

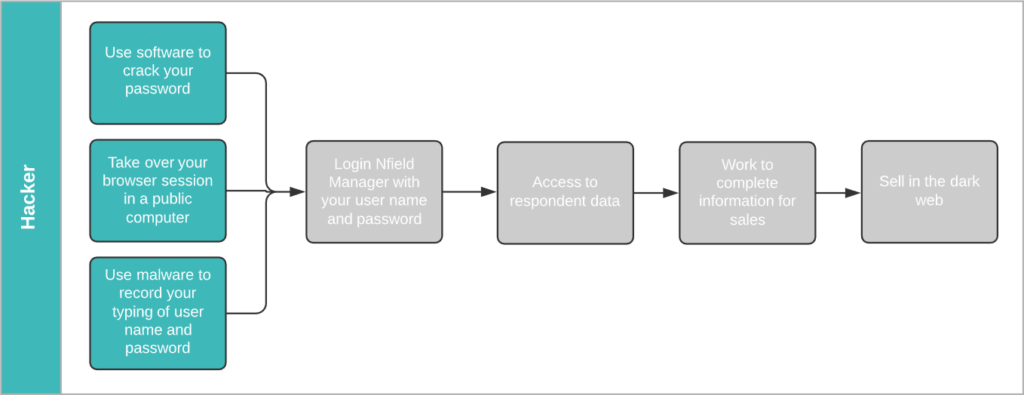

The illustration below shows the process a hacker would go through to harvest your survey participants’ login details from Nfield and make them saleable. If we work backwards from the end prize, we get an idea of the financial temptation.

According to the Dark Web Price Index 2020, a stolen Gmail login (email address/username + password) sells for $155.73. A hacker who obtains your Nfield Manager login name and password gains access to all the sample information in all your surveys. That’s likely to include a high percentage of valid Gmail addresses. To make these saleable on the dark web, all the hacker now has to do is get the corresponding passwords.

Hackers commonly obtain passwords via phishing emails and database matching, both of which are automated processes that easily deliver the goods.

Because login to Google accounts is protected by limits on incorrect password attempts, there’s no point in trying to obtain passwords by endless guessing. Phishing, whereby a hacker approaches you via fake emails pretending to be from a trusted source and asks you to sign in to a related account (thereby revealing your password), is a far more successful method.

Every successful attempt can reap multiple payouts for the hacker. According to LastPass, 65% of people re-use passwords. A lucky hacker could net themselves an additional $74.50 if the same login details give access to a Facebook account and $50 each for Twitter and Instagram accounts1.

Another popular commodity traded on the dark web is credit card details, which sell for between $12 and $20 each.

Credit card details are composed of two parts. The first part is the info displayed on the card itself: credit card number, expiry date, CVV number (on the back) and holder name. These are plentifully available in nicely formatted databases, obtained through skimming devices planted in ATMs and train ticket machines, and are even sold by unscrupulous retailers who’ve taken payments via card swipe machines.

The second part is the card owner’s zip code (derived from the address), city, address, email and phone number: details which are commonly held in survey sample data! Armed with this information, a hacker can easily run a database matching tool between a purchased credit card database and your sample data to obtain complete sets of credit card details, ready to sell.

If you think this seems a lot of work for relatively modest returns, think again. Phishing and database matching are automated processes which take no more effort than a few clicks. Easy money.

Hackers have all the tools they need to crack your password, take over your browser session and use malware to get hold of your Nfield login.

Cracking passwords with software is a basic skill in the hacking world; it’s simply a matter of time. Did you know that 90% of passwords can be cracked in less than six hours [2]?

Using shared computers in public spaces, such as cafés, airport lounges or even client meeting rooms, can lay out the welcome mat for hackers. You never know who is waiting to inspect your activity if you rush off without logging out, or who may have installed password-capture software on the machine.

At the time of writing, a great many of us are working from home due to the COVID-19 pandemic. In this situation, the companies we work for usually have very limited control over the equipment we use for both work and recreation. This vulnerability makes it easier for hackers to implant malware that records your keystrokes and uses them to break into your Nfield account.

[2] https://www.servalsystems.co.uk/6-facts-about-passwords-that-will-make-you-think/

Although the sale value of an individual login or credit card isn’t particularly high, the sums add up quickly. How much do you think all the sample data held in your Nfield account is potentially worth to a criminal? Selling just one Gmail login per day would net a hacker over $56,000 a year. That’s too tempting to ignore, which is why Nfield is now equipped with two-factor authentication to keep hackers out of your account, even if they have cracked your password.
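The annual figure follows from the Gmail login price quoted at the start of this article, assuming one sale on every day of a 365-day year:

$$
365 \times \$155.73 = \$56{,}841.45
$$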

At the very end of 2020, NIPO further enhanced its status within the Microsoft Partner Network by adding a third Gold Competency. Complementing already-held Gold competencies for Application Development and Cloud Platform, the addition of Gold status for Application Integration means NIPO’s team has now received the highest possible recognition from Microsoft for its proficiency in three separate areas.

This achievement underscores NIPO’s ability to provide its customers with a cutting-edge SaaS platform built on the Azure cloud, leveraging the latest Microsoft technologies and fully meeting Microsoft’s standards. NIPO has earned its Gold competencies by demonstrating “best-in-class” ability and commitment to meeting Microsoft customers’ evolving needs in today’s mobile-first, cloud-first world.

Jeroen Noordman, Managing Director of NIPO: “The Application Integration competency is highly relevant to NIPO. Our Nfield platform is open by nature, so we have been very eager to demonstrate our comprehensive understanding of all the opportunities and challenges this topic presents. Attaining this third Gold Competency is also proof of our ongoing commitment to continuous investment in keeping our NIPO team members’ knowledge fully up-to-date. This recognition from the Microsoft Partner Network ecosystem benefits both NIPO and our customers. We are very proud to announce this latest achievement and look forward to having more exciting news to share relating to our MPN involvement during 2021”.

Gavriella Schuster, corporate vice president at One Commercial Partner (OCP) at Microsoft Corp.: “Achieving Gold Competency confirms that partners have demonstrated the highest, most consistent capability and commitment to the latest Microsoft technology. These partners have deep expertise that positions them at the top of our partner ecosystem, with a proficiency which can help customers drive innovative solutions”.

Surveys contain valuable, and sometimes sensitive, information. It’s therefore essential to restrict access to certain parts of surveys to those who need it to do their jobs. This works by assigning users specific roles that only allow access to designated areas and functionality.

Nfield surveys incorporate a number of default roles: Domain Administrator, Power User, Regular User, Scripter, Supervisor, Limited User and Quota Manager, each of which grants an appropriate scope of access.

For example, Scripters can create questionnaires and publish surveys for testing purposes, but cannot publish surveys to go live, send out email invitations or access survey data.

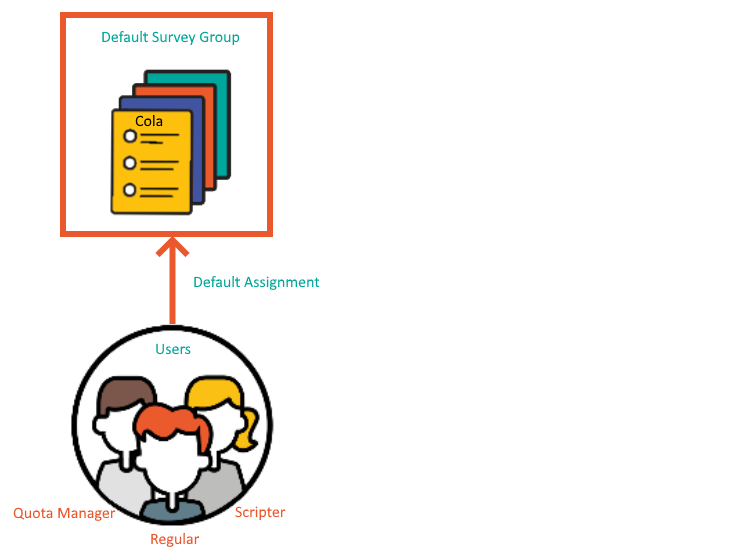

By default, each role’s access rights apply to every survey within a domain. But there may also be reasons to restrict which surveys each user can access.

For example:

Nfield survey access rights can be customized to fit your needs. This is done through the Nfield API and is available as an add-on to your Nfield subscription.

To explain the set-up, we need to look at how Survey Groups work.

A Survey Group is a container for surveys; users are assigned to a Survey Group to gain access to the surveys it contains. By default, existing and newly created surveys are placed in the Default Survey Group, so your existing and newly created users are given the access specified in this Default Survey Group.
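To make the container idea concrete, here is a minimal in-memory sketch in Python. It is a toy model, not Nfield’s implementation, and it assumes only the rules described in this article: each survey sits in one Survey Group (moving it replaces its group), a user can be assigned to several groups, and a user reaches a survey only through a group they are assigned to. Role permissions are deliberately left out, and the class name, users and surveys (SurveyGroupModel, alice, bob, Cola, Lemonade) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class SurveyGroupModel:
    """Hypothetical toy model of Survey Group access (roles not modeled)."""

    survey_group: Dict[str, str] = field(default_factory=dict)      # survey -> its single group
    assignments: Dict[str, Set[str]] = field(default_factory=dict)  # group -> assigned users

    def add_group(self, group: str) -> None:
        self.assignments.setdefault(group, set())

    def place_survey(self, survey: str, group: str) -> None:
        # A survey lives in one group, so placing it again acts as a "move".
        self.add_group(group)
        self.survey_group[survey] = group

    def assign(self, user: str, group: str) -> None:
        self.add_group(group)
        self.assignments[group].add(user)

    def unassign(self, user: str, group: str) -> None:
        self.assignments.get(group, set()).discard(user)

    def can_access(self, user: str, survey: str) -> bool:
        # Access exists only if the user is assigned to the survey's group.
        group = self.survey_group.get(survey)
        return group is not None and user in self.assignments.get(group, set())


DEFAULT = "Default Survey Group"
m = SurveyGroupModel()
for user in ("alice", "bob"):          # alice: Scripter, bob: Regular User
    m.assign(user, DEFAULT)
m.place_survey("Cola", DEFAULT)
m.place_survey("Lemonade", DEFAULT)

# Restrict the confidential Cola survey to alice only.
m.place_survey("Cola", "Survey Group Cola")
m.assign("alice", "Survey Group Cola")

print(m.can_access("alice", "Cola"))      # True
print(m.can_access("bob", "Cola"))        # False
print(m.can_access("alice", "Lemonade"))  # True: still assigned to the default group
```

Because a user can belong to several groups, alice keeps her default access to other surveys unless she is explicitly unassigned from the Default Survey Group.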

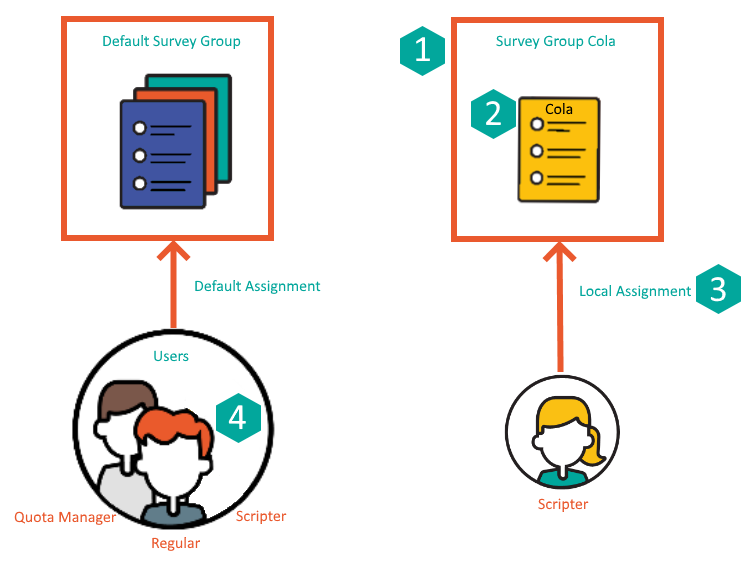

To demonstrate, let’s say you have a survey about Cola that is highly confidential, so access must be restricted to an individual Scripter. This would need to be set up as shown below, with a new container called “Survey Group Cola” that contains the Cola survey and grants access only to your designated Scripter.

In terms of API calls, this breaks down into the following steps:

| Step | API call |
|---|---|
| 1) Create the group “Survey Group Cola”. | POST v1/SurveyGroups |
| 2) Move the Cola survey from “Default Survey Group” to “Survey Group Cola”. | PUT v1/Surveys/{surveyId}/SurveyGroup |
| 3) Assign the Scripter to “Survey Group Cola”. | PUT v1/SurveyGroups/{surveyGroupId}/AssignLocal |
| 4) Unassign the Scripter from “Default Survey Group”.* | PUT v1/SurveyGroups/{surveyGroupId}/UnassignLocal |

*Remark: One user can be assigned to multiple survey groups, so you may skip this step if you want the Scripter to keep their access through the Default Survey Group.
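The four calls in the table can be chained in a script. The Python sketch below is a minimal illustration under stated assumptions, not NIPO’s client. The endpoint paths are the ones from the table, but the request bodies (Name, SurveyGroupId, IdentityIds) and the SurveyGroupId field read from the POST response are hypothetical placeholders, not the documented Nfield schema. The function takes a send callable instead of making HTTP requests itself, so the sequence can be dry-run without network access; default_group_id is assumed to be the ID of the Default Survey Group.

```python
from typing import Callable, List, Optional, Tuple

# A transport takes (method, path, json_body) and returns the parsed JSON response.
Transport = Callable[[str, str, Optional[dict]], dict]
Call = Tuple[str, str, Optional[dict]]


def restrict_survey_to_user(
    send: Transport,
    survey_id: str,
    user_id: str,
    default_group_id: int,
    group_name: str = "Survey Group Cola",
    remove_default_access: bool = True,
) -> int:
    """Run steps 1-4 and return the new group's ID.

    ASSUMPTION: body field names and the "SurveyGroupId" response field are
    hypothetical; check the Nfield API reference for the real schema.
    """
    # 1) Create the new survey group.
    created = send("POST", "v1/SurveyGroups", {"Name": group_name})
    group_id = created["SurveyGroupId"]  # hypothetical response field

    # 2) Move the survey out of the Default Survey Group into the new group.
    send("PUT", f"v1/Surveys/{survey_id}/SurveyGroup", {"SurveyGroupId": group_id})

    # 3) Give the designated user access to the new group.
    send("PUT", f"v1/SurveyGroups/{group_id}/AssignLocal", {"IdentityIds": [user_id]})

    # 4) Optional: remove the user's access via the Default Survey Group.
    if remove_default_access:
        send("PUT", f"v1/SurveyGroups/{default_group_id}/UnassignLocal",
             {"IdentityIds": [user_id]})

    return group_id


def make_recording_transport(new_group_id: int) -> Tuple[Transport, List[Call]]:
    """Fake transport for a dry run: records each call instead of sending it."""
    calls: List[Call] = []

    def send(method: str, path: str, body: Optional[dict]) -> dict:
        calls.append((method, path, body))
        return {"SurveyGroupId": new_group_id} if method == "POST" else {}

    return send, calls


send, calls = make_recording_transport(new_group_id=42)
group_id = restrict_survey_to_user(send, survey_id="cola-survey-id",
                                   user_id="scripter-id", default_group_id=1)
for method, path, _body in calls:
    print(method, path)
```

In real use, send would wrap an HTTP client that prefixes your Nfield API base URL and adds an authentication header; the Nfield API documentation describes those details and the actual request and response formats. Passing remove_default_access=False corresponds to skipping step 4, as the remark above allows.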

The following illustration demonstrates this using Postman for executing the API calls.

As described in ISO 27001, “Put simply, access control is about who needs to know, who needs to use and how much they get access to.” It’s a topic that comes up constantly on our own work floor. This Nfield feature gives you the control you need to restrict survey access rights appropriately, safeguarding data security while still enabling people to do their jobs.
